Production-Grade LLaMA 3.2 Multimodal Interface Implementation

The LLaMA 3.2 Multimodal Web Interface is a web front end for Meta’s multimodal LLaMA 3.2 model, served through Ollama. It combines text and image processing in a single interface, so researchers and developers can build multimodal AI applications on a production-ready base.

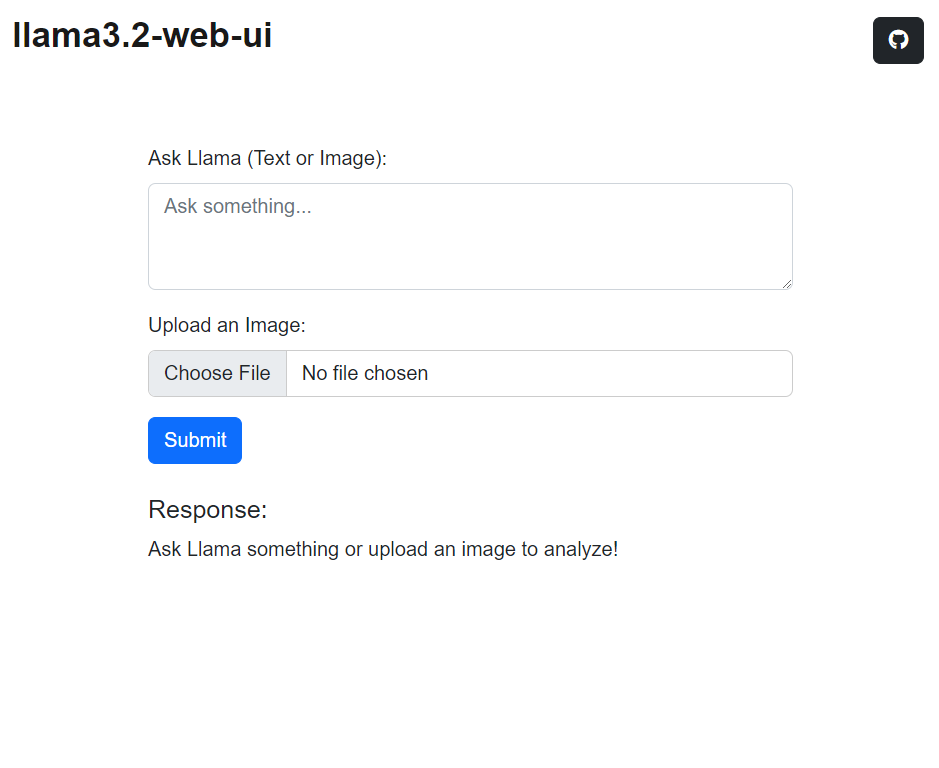

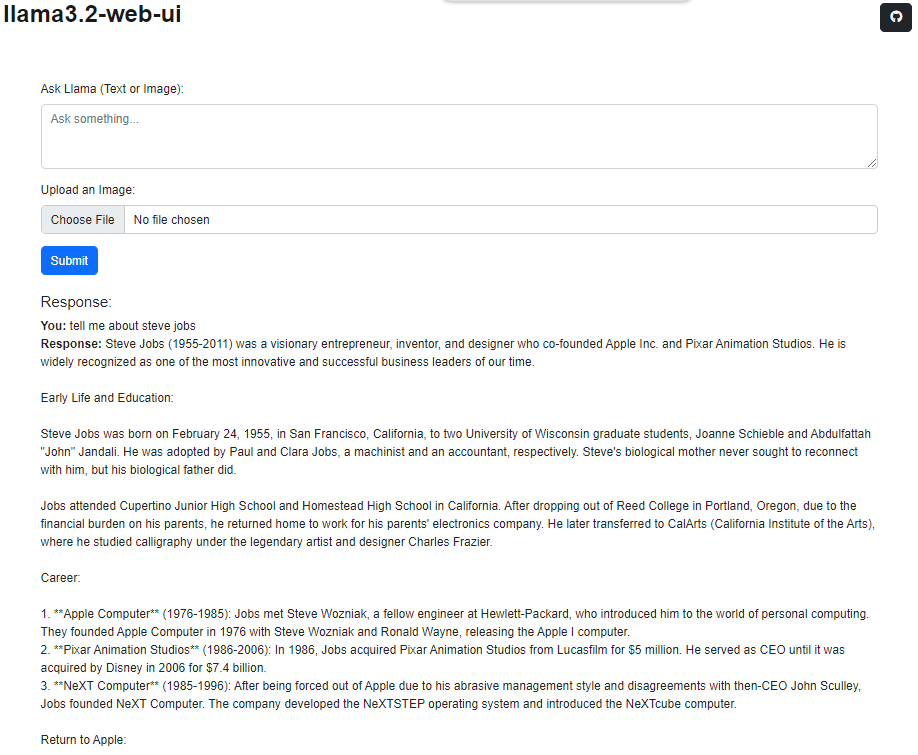

The platform accepts text and image inputs together and generates context-aware responses. Through the web interface, users can request code generation, visual analysis, and detailed explanations. The architecture is intended to scale from research environments to production applications that need reliable multimodal processing.

System Validation: Tested on Ubuntu Linux distributions, with cross-platform compatibility verified for enterprise deployment scenarios.

Enterprise Feature Architecture

- Advanced Multimodal Processing: Comprehensive text and image input integration with context-aware response generation and cross-modal understanding capabilities

- Professional Code Formatting: Syntax-highlighted code blocks with comprehensive documentation and detailed implementation explanations for production environments

- Developer Integration Tools: One-click code snippet extraction with clipboard functionality enabling seamless integration into development workflows and production systems

- Cross-Platform Responsive Design: Responsive interface that works on desktop workstations, mobile devices, and tablets with a consistent user experience

- Comprehensive Output Management: Multi-format content generation, including text analysis, image processing, and multimedia responses, powered by the LLaMA 3.2 architecture

Production System Installation and Configuration

Deploy the LLaMA 3.2 Multimodal Web Interface with the following steps:

Repository Acquisition and Setup

Clone the repository:

git clone https://github.com/iamgmujtaba/llama3.2-webUI

Project Environment Configuration

Navigate to the project directory:

cd llama3.2-webUI

Ollama Platform Installation

Ensure Ollama is installed and configured on your system:

- Download and install Ollama from the official distribution

- Verify the installation from the command line (for example, ollama --version)
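The verification step could also be scripted. The sketch below is only an illustration, not part of this repository: it assumes Ollama's default local address (http://localhost:11434) and its /api/version endpoint, and the helper names (ollama_on_path, parse_version, server_version) are hypothetical.

```python
import json
import shutil
import urllib.error
import urllib.request
from typing import Optional

# Assumption: Ollama's default local address; adjust if your setup differs.
OLLAMA_URL = "http://localhost:11434"


def ollama_on_path() -> bool:
    """Return True if an `ollama` executable is found on PATH."""
    return shutil.which("ollama") is not None


def parse_version(body: bytes) -> Optional[str]:
    """Extract the version string from an /api/version JSON body."""
    try:
        data = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return None
    version = data.get("version") if isinstance(data, dict) else None
    return version if isinstance(version, str) else None


def server_version(base_url: str = OLLAMA_URL, timeout: float = 3.0) -> Optional[str]:
    """Return the running server's version, or None if it is unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/version", timeout=timeout) as resp:
            return parse_version(resp.read())
    except (urllib.error.URLError, OSError):
        return None
```

Calling ollama_on_path() and then server_version() gives a quick two-part check: the first confirms the binary is installed, the second that the Ollama server is actually running.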

Application Deployment

Start the server using the provided script:

bash run.sh

The script starts a local development server and launches the application interface at http://localhost:8000, where it is immediately available for use and testing.
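When the launch is scripted, for instance in a smoke test, it can help to wait until the port actually accepts connections before opening the page. A minimal sketch, assuming only the localhost:8000 address given above; wait_for_port is a hypothetical helper, not something run.sh provides.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Poll until a TCP connection to host:port succeeds or the timeout elapses."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # A successful connect means something is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

For example, after starting bash run.sh in another terminal, wait_for_port("localhost", 8000) returns True once the server is listening and False if nothing comes up within 30 seconds.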

Production System Operations

Interface Interaction Protocols

The interface accepts two kinds of input:

- Text Input Processing: Submit queries and prompts through the input interface with immediate processing and response generation

- Image Upload Integration: Upload images for visual analysis, content extraction, and multimodal reasoning
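To make the two input types concrete, the sketch below shows how a text prompt and an optional image could be combined into a single request to Ollama's /api/generate endpoint. How this web UI forwards input internally is not documented here, so treat this as an assumption-laden illustration: the endpoint address is Ollama's default, the model tag llama3.2-vision is assumed and may differ from the one you pulled, and build_payload and generate are hypothetical names.

```python
import base64
import json
import urllib.request
from typing import Optional

# Assumptions: Ollama's default address and a locally pulled vision model tag.
OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"
MODEL_NAME = "llama3.2-vision"


def build_payload(prompt: str, image_bytes: Optional[bytes] = None, model: str = MODEL_NAME) -> dict:
    """Build the JSON body for a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    if image_bytes is not None:
        # Ollama expects images as a list of base64-encoded strings.
        payload["images"] = [base64.b64encode(image_bytes).decode("ascii")]
    return payload


def generate(prompt: str, image_bytes: Optional[bytes] = None, timeout: float = 120.0) -> str:
    """Send the request to a running Ollama server and return the response text."""
    data = json.dumps(build_payload(prompt, image_bytes)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_GENERATE_URL,
        data=data,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

A text-only query simply omits the image, so the same function covers both bullets above; generate requires a running Ollama server, while build_payload can be inspected offline.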

Response Management and Analysis

- Professional Text Formatting: Clean, structured response presentation that stays readable for technical and non-technical users

- Advanced Code Syntax Highlighting: Production-grade code presentation with language-specific highlighting supporting multiple programming languages and frameworks

- Comprehensive Multimodal Analysis: Integrated text and visual processing that provides detailed content analysis, image annotation, and contextual explanations
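Language-specific highlighting and snippet copying both depend on first locating the code blocks in a model reply. The sketch below illustrates that step in isolation, assuming the model emits Markdown-style fenced blocks; it is not the repository's actual rendering code, and extract_code_blocks is a hypothetical name.

```python
import re

# Built programmatically so this example contains no literal fence of its own.
FENCE = "`" * 3

# Opening fence, optional language tag, the code body, then the closing fence.
CODE_BLOCK_RE = re.compile(
    FENCE + r"([\w+-]*)[ \t]*\n(.*?)\n?" + FENCE,
    re.DOTALL,
)


def extract_code_blocks(response_text: str) -> list:
    """Return (language, code) pairs for each fenced block in the text.

    Blocks without a language tag are labeled "text".
    """
    return [
        (match.group(1) or "text", match.group(2))
        for match in CODE_BLOCK_RE.finditer(response_text)
    ]
```

Each returned pair carries what a front end would need: the language tag selects the highlighter, and the code string is what a copy button would place on the clipboard.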

System Validation Screenshots

Professional Development and Contribution Guidelines

Community contributions are welcome through the standard GitHub workflow and are subject to code review.

Licensing and Distribution

This project is released under the MIT License. The full license text and usage terms are in the LICENSE file in the project repository.

Project Repository: This implementation is actively maintained on GitHub. Documentation, technical specifications, and development resources are available in the LLaMA 3.2 Multimodal Web UI Repository (https://github.com/iamgmujtaba/llama3.2-webUI), along with ongoing updates and community support.